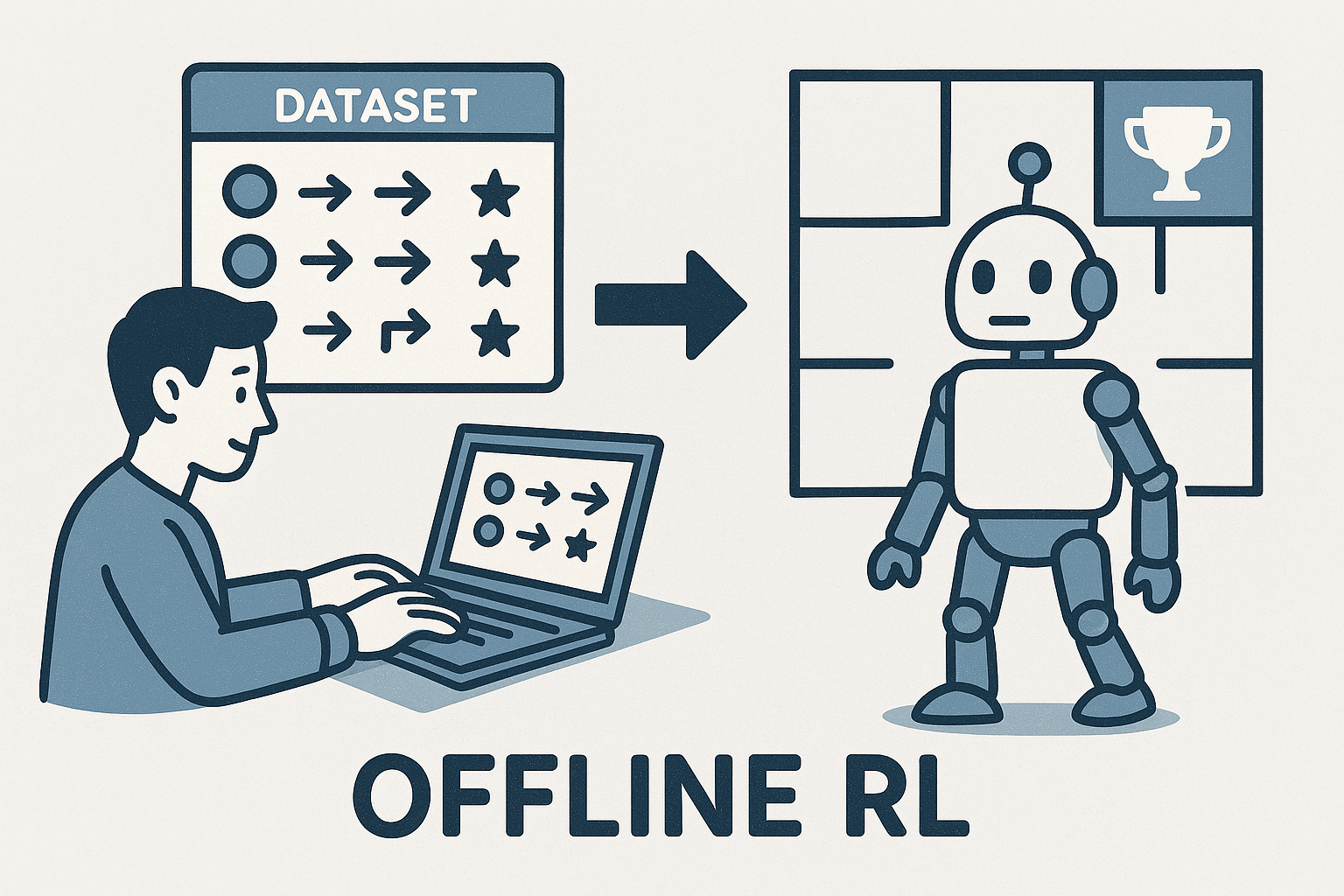

Offline Reinforcement Learning

Offline Reinforcement Learning (Offline RL) is a branch of reinforcement learning in which an agent learns a policy solely from a fixed dataset of past interactions, with no further access to the environment during training. The dataset consists of trajectories collected by one or more behavior policies, which need not be optimal. A key challenge in offline RL is distributional shift: when the learned policy favors actions that are poorly represented in the dataset, the value estimates for those actions are extrapolated rather than supported by data and can be unreliable. Offline RL is especially important in domains where collecting data in real time is costly, risky, or impractical, such as healthcare, robotics, finance, and recommendation systems, because it allows policies to be learned safely and efficiently from historical data.
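To make distributional shift concrete, here is a minimal sketch that assumes a small tabular problem with integer states and actions and a logged dataset of `(state, action, reward, next_state, done)` tuples. The functions `fitted_q_iteration` and `greedy_policy`, and the `init_value` and `support_only` parameters, are hypothetical names chosen for illustration. `init_value` stands in for the arbitrary values a function approximator might extrapolate to unlogged state-action pairs. Restricting the Bellman max to logged actions is a deliberately crude support constraint. It is not the method of any publication listed on this page.

```python
from collections import Counter, defaultdict


def fitted_q_iteration(dataset, n_states, n_actions, gamma=0.9, n_iters=100,
                       init_value=0.0, support_only=True):
    """Tabular fitted Q-iteration from a fixed list of
    (state, action, reward, next_state, done) tuples; never queries an environment."""
    # How often each (state, action) pair appears in the logged data.
    counts = Counter((s, a) for s, a, _, _, _ in dataset)
    # Pairs absent from the data are never updated and keep init_value, which
    # mimics arbitrary extrapolation by a function approximator.
    q = [[init_value] * n_actions for _ in range(n_states)]
    for _ in range(n_iters):
        sums = defaultdict(float)
        for s, a, r, s2, done in dataset:
            if done:
                target = r
            else:
                # With support_only, bootstrap only from actions logged at s2;
                # if none were logged, fall back to a value of 0.0.
                allowed = [b for b in range(n_actions)
                           if not support_only or counts[(s2, b)] > 0]
                target = r + gamma * max((q[s2][b] for b in allowed), default=0.0)
            sums[(s, a)] += target
        new_q = [row[:] for row in q]
        for (s, a), total in sums.items():
            # "Fit" step in tabular form: average the Bellman targets per pair.
            new_q[s][a] = total / counts[(s, a)]
        q = new_q
    return q, counts


def greedy_policy(q, counts, support_only=True):
    """Highest-valued action per state, optionally only among logged actions."""
    policy = {}
    for s, row in enumerate(q):
        allowed = [a for a in range(len(row))
                   if not support_only or counts[(s, a)] > 0]
        if allowed:
            policy[s] = max(allowed, key=lambda a: row[a])
    return policy


# Tiny logged dataset: the behavior policy only ever took action 0.
data = [(0, 0, 0.0, 1, False), (1, 0, 1.0, 2, True)]

q_naive, counts = fitted_q_iteration(data, 3, 2, init_value=10.0, support_only=False)
q_safe, _ = fitted_q_iteration(data, 3, 2, init_value=10.0, support_only=True)

print(q_naive[0][0], greedy_policy(q_naive, counts, support_only=False))
# 9.0 {0: 1, 1: 1, 2: 0}
print(q_safe[0][0], greedy_policy(q_safe, counts))
# 0.9 {0: 0, 1: 0}
```

With `support_only=False`, the target for state 0 bootstraps from the never-updated value of the unlogged action in state 1, so the estimate inflates to 9.0. The greedy policy then selects an action the dataset never contains. Restricting the max to logged actions keeps the estimate tied to observed rewards (0.9). The cost is that the policy can never prefer actions the behavior policy did not try.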

Selected publications (5)

- A Principled Path to Fitted Distributional Evaluation

- A Fine-grained Analysis of Fitted Q-evaluation: Beyond Parametric Models

- Projected State-action Balancing Weights for Offline Reinforcement Learning

- Off-Policy Evaluation for Episodic Partially Observable Markov Decision Processes under Non-Parametric Models

- Reinforcement learning for individual optimal policy from heterogeneous data